I have commenced the long and tedious task of checking the Acorn adjustments of minimum temperatures at various stations by comparing with the lists of “highly correlated” neighbouring stations that the Bureau of Meteorology has kindly but so belatedly provided. Up to 10 stations are listed for each adjustment date, and presumably are the sites used in the Percentile Matching process.

It is assumed by the Bureau that any climate shifts will show up in all stations in the same (though undefined) region. Therefore, by finding the differences between the target or candidate station’s data and its neighbours, we can test for ‘inhomogeneities’ in the candidate site’s data, as explained in CTR-049, pp. 44-47. Any inhomogeneities will show up as breakpoints when data appears to suddenly rise or fall compared with neighbours. Importantly, we can use this method to test both the raw and adjusted data.
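A minimal sketch of the differencing idea, assuming each station's record is held as a Python dict mapping year to annual minimum anomaly (°C). The names `difference_series` and `step_change` are illustrative only. They do not come from CTR-049 or any Bureau software, and this is not the Bureau's Percentile Matching procedure. It is just the simple candidate-minus-neighbours comparison described above, plus a crude before/after test for a breakpoint.

```python
from statistics import mean


def difference_series(candidate, neighbours):
    """Average of (candidate - neighbour) for each year.

    candidate:  dict mapping year -> annual anomaly (degrees C)
    neighbours: list of dicts with the same layout
    Assumption: a year is kept only if the candidate and every
    neighbour have a value for it.
    """
    common = set(candidate)
    for nbr in neighbours:
        common &= set(nbr)
    return {
        year: mean(candidate[year] - nbr[year] for nbr in neighbours)
        for year in sorted(common)
    }


def step_change(diffs, year):
    """Mean difference from `year` onwards minus mean difference before it.

    A value well away from zero suggests a breakpoint, i.e. a sudden rise
    or fall relative to the neighbours, at or near `year`.
    """
    before = [d for y, d in diffs.items() if y < year]
    after = [d for y, d in diffs.items() if y >= year]
    return mean(after) - mean(before)
```

Requiring every neighbour in every year keeps the sketch simple. A real comparison would probably allow missing neighbour years, which this code does not attempt.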

Ideally, a perfect station with perfect neighbours will show zero differences: the average of their differences will be a straight line at zero. Importantly, even if the differences fluctuate, there should be zero trend. Any trend indicates past temperatures appear to be either relatively too warm or too cool at the station being studied. It is not my purpose here to evaluate whether or not individual adjustments are justified, but to check whether the adjusted Acorn dataset compares with neighbours more closely. If so, the trend in differences should be close to zero.
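One way to express the "zero trend" test is an ordinary least-squares slope fitted to the difference series. The sketch below assumes OLS is an adequate trend measure; the post does not say how the trend lines in the graphs were fitted. The four-year series is hypothetical and chosen so that it fluctuates yet has no trend.

```python
def ols_slope(years, values):
    """Least-squares slope of `values` against `years` (units per year)."""
    n = len(years)
    if n < 2:
        raise ValueError("need at least two points for a trend")
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    sxx = sum((x - mean_x) ** 2 for x in years)
    return sxy / sxx


# Hypothetical difference series: it fluctuates but has no trend.
years = [2000, 2001, 2002, 2003]
diffs = [0.3, -0.3, -0.3, 0.3]
slope = ols_slope(years, diffs)
print(f"{slope:.3f} C/year, {slope * 100:.2f} C/100 years")
```

A positive slope means the candidate has warmed relative to its neighbours over the record. Equivalently, its early years sit relatively too cool, and a negative slope means the reverse.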

In all cases I used differences in annual minima anomalies from the 1961-1990 mean, or if the overlap was shorter than this period, anomalies from the actual period of overlap. Where I am unable to calculate differences for an Acorn merge or recent adjustment due to absence of suitable overlapping data (e.g. Amberley 1997 and Bourke 1999, 1994), as a further test I have assumed these adjustments are correct and applied them to the raw data.
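The baseline rule above can be sketched for one candidate/neighbour pair as follows. This encodes one reading of the text, which is an assumption. The code uses 1961-1990 when both records cover all of it. Otherwise it uses the years the two records share. Function names are hypothetical.

```python
from statistics import mean

STANDARD_BASE = range(1961, 1991)  # 1961-1990 inclusive


def base_years(candidate, neighbour):
    """Years used as the anomaly baseline for one candidate/neighbour pair."""
    overlap = sorted(set(candidate) & set(neighbour))
    if not overlap:
        raise ValueError("the two records do not overlap")
    if all(y in candidate and y in neighbour for y in STANDARD_BASE):
        return list(STANDARD_BASE)
    return overlap  # overlap shorter than 1961-1990: use the overlap itself


def anomalies(series, years):
    """Subtract the mean over `years` from every value in `series`."""
    baseline = mean(series[y] for y in years)
    return {y: v - baseline for y, v in series.items()}


def pair_difference(candidate, neighbour):
    """Candidate anomaly minus neighbour anomaly for each shared year."""
    years = base_years(candidate, neighbour)
    cand_anom = anomalies(candidate, years)
    nbr_anom = anomalies(neighbour, years)
    shared = sorted(set(candidate) & set(neighbour))
    return {y: cand_anom[y] - nbr_anom[y] for y in shared}
```

Computing anomalies over the same years for both stations removes any constant offset between them, such as one site being generally colder. Only changes over time are left in the differences.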

I have completed analyses for Rutherglen, Amberley, Bourke, Deniliquin, and Williamtown.

The results are startling.

In every case, the average difference between the Acorn adjusted data and the neighbouring comparison stations shows a strongly positive trend, indicating Acorn does not accurately reflect regional climate.

Even when later adjustments are assumed to be correct the same effect is seen.

Interim Conclusion:

Based on differencing raw and adjusted data against the listed comparison stations at five of the sites recently discussed by Jennifer Marohasy, Jo Nova, or myself, Acorn adjustments to minima have a distinct warming bias. It remains to be seen whether this is a widespread phenomenon.

I will continue analysing using this method for other Acorn sites, including those that are strongly cooled. At those sites I expect to find the opposite: that the differences show a negative trend.

Scroll down for graphs showing the results.

Rutherglen

(Note the Rutherglen raw minus neighbours trend is flat, indicating good regional comparison. Adjustments for discontinuities should maintain this relationship.)

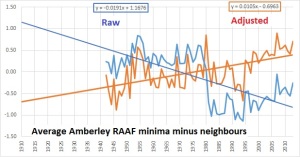

Amberley (a)

(Note that the 1980 discontinuity is plainly obvious but may have been over-corrected.)

Amberley (b): 1997 merge (-0.44) assumed correct

Treating the 1997 adjustment as correct has no effect on the trend in differences.

Bourke (a)

Bourke (b): 1999 and 1994 merges assumed correct.

No change in trend of differences.

Deniliquin

(Note the adjusted differences still show a strong positive trend, but less than the other examples.)

Williamtown

(Applying an adjustment to all years before 1969 produces a strong positive trend in differences.)
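The mechanism can be illustrated with synthetic numbers. Start from a hypothetical raw-minus-neighbours series with no trend at all. Then lower every year before 1969 by a hypothetical 0.5 °C; the actual Williamtown adjustment value is not given here. The fitted trend becomes positive. The trend measure is an ordinary least-squares slope, which is an assumption about how trends are fitted.

```python
def ols_slope(years, values):
    """Least-squares slope of `values` against `years` (units per year)."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    sxx = sum((x - mean_x) ** 2 for x in years)
    return sxy / sxx


# Hypothetical raw-minus-neighbours series with no trend.
years = list(range(1950, 2011))
raw_diffs = [0.0] * len(years)

# Hypothetical step adjustment: every year before 1969 lowered by 0.5 C.
STEP_YEAR, STEP = 1969, -0.5
adj_diffs = [d + (STEP if y < STEP_YEAR else 0.0) for y, d in zip(years, raw_diffs)]

print(ols_slope(years, raw_diffs))  # zero: no trend before adjustment
print(ols_slope(years, adj_diffs))  # positive: early years now cool relative to neighbours
```

If the raw differences were already flat, any step applied to the early years alone must tilt the difference series.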

Tags: Acorn, adjustments, Bureau of Meteorology

September 18, 2014 at 8:38 am

A 10 station adjustment is clearly ludicrous. Any meaningful, station-specific signal will be lost in the homogenisation.

September 18, 2014 at 11:25 am

This is incredibly important work. Your chart for Rutherglen is almost too bad from the perspective of BOM’s credibility to be true.

September 18, 2014 at 12:19 pm

True it is. After a lot more comparisons we can start drawing some conclusions about (a) detecting discontinuities, (b) whether Percentile Matching (PM) over-corrects, and (c) quality assurance or lack thereof.

September 18, 2014 at 6:29 pm

If Rutherglen shows an increase when homogenised with its neighbours then surely the neighbouring stations must show a decrease when homogenised with Rutherglen, unless someone is pulling a swifty.

September 18, 2014 at 6:47 pm

Williamtown is a near-coastal site, likely to have strong temperature gradients and local influences. The adjustment sites listed by the BoM are all over the place, both in distance to the ocean and in latitude, and are often over 100 km away.

My gut feeling is that no set of algorithms can deal with that much complexity; the BoM should have applied some sanity checks and left the raw data uncorrected.

September 19, 2014 at 6:02 pm

Fantastic work Ken. The BOM gets itself deeper and deeper into the doodoo.

Next step is to find out who has been doing the adjustments.

September 19, 2014 at 7:26 pm

[…] this is not science because no methodology has been provided. In fact, when one of my colleagues, Ken Stewart, tested this proposition. He found that the raw data for Rutherglen has a virtually identical trend to its neighbouring […]

September 22, 2014 at 7:56 am

Ken

Just check the new addition to the BoM ACORN site.

http://www.bom.gov.au/climate/change/acorn-sat/#tabs=Early-data

September 22, 2014 at 9:44 am

Wow, 2 new tabs in 2 weeks. They’ll need another row of tabs soon.

The SE Australian paper is a doozy too IMHO.

September 25, 2014 at 6:03 am

Ken, a question for you about the temperature adjustments given in ACORN-SAT-station-adjustment-summary.pdf:

Are the adjustments meant to be cumulative or not? For example, for Rutherglen Tmin, is the adjustment of the raw data in 1965 -0.63, or is it -(0.57 + 0.63)? Actually there are two questions: what was intended by the writer of the document, and what has been implemented by BoM in the ACORN-SAT data?

It would be most user-friendly to give the TOTAL adjustment of RAW data at each changepoint. As it stands, could users and/or BoM data adjusters be getting it wrong?

September 25, 2014 at 8:15 am

Adjustments are cumulative. Starting with the most recent and working backwards, each adjustment is applied to all previous years. So at Rutherglen the total adjustment to years before 1965 is -1.2, and there is another -0.49 in 1928.
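The cumulative rule can be sketched as below. Each adjustment from the station adjustment summary is treated as a single constant offset, applied to every year strictly before its changepoint. "Strictly before" is an assumption. So is the constant offset: percentile matching can shift different parts of the temperature distribution by different amounts. The function name is hypothetical, and the raw values in the example are made up. At Rutherglen this gives years before 1965 a total of -0.57 + -0.63 = -1.20.

```python
def apply_adjustments(raw, adjustments):
    """Apply step adjustments cumulatively to a raw annual series.

    raw:         dict mapping year -> raw value (degrees C)
    adjustments: dict mapping changepoint year -> adjustment (degrees C)

    Every adjustment is added to all years before its changepoint, so an
    early year receives the sum of all later adjustments. Because the
    adjustments are constant offsets, summing them in any order gives the
    same result as working back from the most recent one.
    """
    adjusted = {}
    for year, value in raw.items():
        total = sum(adj for changepoint, adj in adjustments.items() if year < changepoint)
        adjusted[year] = value + total
    return adjusted


# Amberley's 1997 merge (-0.44) applied to a hypothetical raw series:
# 1995 and 1996 are lowered by 0.44; 1997 and 1998 are unchanged.
raw = {1995: 15.0, 1996: 15.2, 1997: 15.1, 1998: 15.3}
print(apply_adjustments(raw, {1997: -0.44}))
```

This is also how an assumed-correct merge adjustment can be applied to raw data for testing, as was done for Amberley and Bourke.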

September 25, 2014 at 4:37 pm

When you’re checking things you sometimes come across something interesting so I thought I would bring this to your attention.

Sydney Obs had its warmest May in 2014 (23.2C) and in the Sydney May 2014 summary it mentions 2007 as the previous record (22.7C).

Yet CDO, ACORN and the 2007 Sydney summary for May say that the temp was 22.4C and second to 1958 (22.7C).

I know May 1958 for Sydney has been adjusted down (and 1923 has been adjusted up) in the ACORN data which might make May 2007 second – but I don’t get the extra 0.3C for May 2007 in the 2014 report. Effect???

2014 summary

http://www.bom.gov.au/climate/current/month/nsw/archive/201405.sydney.shtml

2007 summary

http://www.bom.gov.au/climate/current/month/nsw/archive/200705.sydney.shtml

Any ideas?

September 25, 2014 at 6:50 pm

Who knows!

September 28, 2014 at 6:21 pm

Ken, above at Amberley you mention the 1997 “merge”. Any idea what the difference between a “merge” and a site move is?

September 29, 2014 at 7:27 am

A site move is when a screen moves some distance with no comparative data being run alongside it. A merge is when a site moves to another site and the two sites overlap so that there is comparative data, or when a third local site overlaps both of them.

June 24, 2015 at 4:43 pm

[…] I explained in my post in September 2014, Acorn sites are homogenised by an algorithm which references up to 10 neighbouring sites. A test […]

February 14, 2019 at 11:31 am

[…] others) found to have very many severe problems. (If you like, check these posts, here, here, here, and here. There are many […]

May 20, 2019 at 7:23 pm

[…] others) found to have very many severe problems. (If you like, check these posts, here, here, here, and here. There are many […]