By Ken Stewart, ably assisted by Chris Gillham, Phillip Goode, Ian Hill, Lance Pidgeon, Bill Johnston, Geoff Sherrington, Bob Fernley-Jones, and Anthony Cox.

In the previous post of this series I explained how the Bureau of Meteorology presents summaries of weather observations at 526 weather stations around Australia, and questioned whether instrument error or sudden puffs of wind could cause very large temperature fluctuations in less than 60 seconds observed at a number of sites.

The maximum or minimum temperature you hear on the weather report or see at Climate Data Online is not the hottest or coldest hour, or even minute, but the highest or lowest ONE SECOND VALUE for the whole day. There is no error checking or averaging.

A Bureau officer explains:

Firstly, we receive AWS data every minute. There are 3 temperature values:

1. Most recent one second measurement

2. Highest one second measurement (for the previous 60 secs)

3. Lowest one second measurement (for the previous 60 secs)

Relating this to the 30 minute observations page: For an observation taken at 0600, the values are for the one minute 0559-0600.

Automatic Weather Station instruments were introduced from the late 1980s, with the AWS becoming the primary temperature instrument at a large number of sites from November 1 1996. They are now universal.

An AWS temperature probe collects temperature data every second, giving 60 datapoints per minute. The values given each half hour (and occasionally at times in between) on each station’s Latest Weather Observations page are samples: spot temperatures for the last second of the last minute of that half hour. The Low Temp and High Temp values on the District Summary page are the lowest and highest one second readings within that minute of reporting. The remaining seconds of data are filtered out. There is no averaging to find the mean over, say, one minute or ten minutes. There is NO error checking to flag rogue values. The maximum temperatures are dutifully reported in the media, especially if some record has been broken. Quality Control does not occur for at least two or three months, and then spurious values are just quietly deleted, long after record temperatures have been spruiked in the media.
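The reporting scheme described by the Bureau officer can be sketched in a few lines of Python. This is an illustration only, not the Bureau's code: the function name `minute_summary` and the sample readings are invented for the example.

```python
def minute_summary(samples):
    """Reduce one minute of one-second readings to the three reported values."""
    if not samples:
        raise ValueError("need at least one reading")
    return {
        "latest": samples[-1],  # most recent one-second measurement
        "high": max(samples),   # highest one-second measurement in the minute
        "low": min(samples),    # lowest one-second measurement in the minute
    }


# Hypothetical minute: 59 steady readings and a single one-second spike.
readings = [30.0] * 60
readings[20] = 32.9
summary = minute_summary(readings)
print(summary)
```

In this made-up minute a single rogue second sets the High Temp, while the other 59 readings have no influence on it.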

In How Temperature is “Measured” in Australia: Part 1 I demonstrated how this method has resulted in large differences recorded in the exact same minutes at a number of stations.

What explanation is there for these differences?

The Bureau will insist they are due to natural weather conditions. Some rapid temperature changes are indeed due to weather phenomena. Here are some examples.

In semi-desert areas of far western Queensland, such as in this example from Urandangi, temperatures rise very rapidly in the early morning.

Fig. 1: Natural rapid temperature increase

For 24 minutes the temperature was increasing at an average of more than 0.2C per minute. That is the fastest I’ve seen, and entirely natural. Yet at Hervey Bay on 22 February the temperature rose more than two degrees in less than a minute, before 6 a.m., many times faster than it did later in the morning.

Similarly, on Wednesday 8 March, a cold change with strong wind and rain came through Rockhampton. Luckily the Bureau recorded temperatures at 4:48 and 4:49 p.m., and in that minute there was a drop of 1.2C.

Fig. 2: Natural rapid temperature decrease

That was also entirely natural, and associated with a weather event.

For the next plots, which show questionable readings, I have supplemented BOM data with data from an educational site run by the UK Met Office, WOW (Weather Observations Worldwide). The Met gets data from the BOM at about 10 minutes before the hour, so we have an additional source which increases the sample frequency. The examples selected are all well-known locations in Queensland, frequently mentioned on ABC TV weather. They have been selected purely because they are examples of large one minute changes.

This plot is from Thangool Airport near Biloela, southwest of Rockhampton, on Friday 10 March. The weather was fine, sunny, and hot, with no storms or unusual weather events.

Fig. 3: Temperature spike and rapid fall at Thangool

This one is for Coolangatta International Airport on the Gold Coast on 20th February.

Fig. 4: Temperature spike and rapid fall at Coolangatta

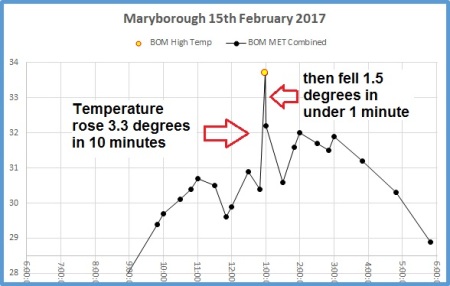

And Maryborough Airport on 15th February:

Fig. 5: Temperature spike and rapid fall at Maryborough (Qld)

Figure 5(b): The weirdest spike and fall: Coen Airport 21 March

Thanks to commenter MikeR for finding that one.

All of these were in fine sunny conditions in the hottest part of the day. It is difficult to imagine a natural meteorological event that would cause such rapid fluctuations, in particular rapid falls, as in the above examples. It is possible they were caused by some other event such as jet blast or prop wash blowing hotter air over the probe during aircraft movement, quickly replaced by air at the ambient surrounding temperature. It is either that or random instrument error. Either way, the result is the same: rogue outliers are being captured as maxima and minima.

How often does this happen?

Over one week I collected 200 instances where the High Temps and Low Temps could be directly checked as they occurred in the same minute as the 30 minute observation.

The results are astounding. The differences occurring in readings in the same minute are scattered across the range of temperatures. Most High Temp discrepancies are of 0.1 or 0.2 degrees, but there is a significant number (39% of the sample) with 0.3C to 0.5C decreases in less than one minute, and five much larger.

Fig. 6: Temperature change within one minute from maximum

Notice that 95% of the differences were from 0.1C to 0.5C, which suggests that one minute ranges of up to 0.5C are common and expected, while values above this are true outliers. The Bureau claims (see below) that in 90% of cases AWS probes have a tolerance of +/-0.2C, whereas the 2011 Review Panel mentioned “the present ±0.5 °C”. Is the tolerance really +/-0.5C?

Fig. 7: Temperature change within one minute from minimum

There was one instance where there was no difference. The vast majority have a -0.1C difference, which is within the instruments’ tolerance.

This next plot shows the differences (temperature falls in one minute from the second with the highest reading to that of the final second) ordered from greatest to least.

Fig. 8: Ordered count of temperature falls

The few outliers are obvious. More than half the differences are of 0.1C or 0.2C.

One minute temperature rises:

Fig. 9: Ordered count of temperature rises

Note the outlier at -2.1C: that was Hervey Bay Airport. Also note only one example with no difference, and the majority at -0.1C.

Is there any pattern to them?

The minimum temperature usually occurs around sunrise, although in summer this varies, but very rarely when the sun is high in the sky. Therefore rapid temperature rise at this time will be relatively small, as the analysis shows: 80% of the differences between the Low Temps and corresponding final second observations were zero or one tenth of a degree, and 91% two tenths of a degree or less. As the instrument tolerance of AWS sensors is supposed to be +/- 0.2C, the vast majority of Low Temps are within this range. Therefore, the Low Temps are not significantly different from the Latest Observation figures. Yet as it is the Lowest Temperature that is being recorded, all but one example have the Low Temp, and therefore daily minimum, cooler than the final second observation. 9% are outside the +/-0.2C range and show real discrepancy, i.e. very rapid temperature rise within one minute, that is worth investigating. Remember, the fastest morning rise I’ve found averaged about 0.2C per minute.

The High Temps have 56% of discrepancies within the +/-0.2C tolerance range. Day time temperatures are much more subject to rapid rise and fall of temperatures. The 44% of discrepancies of 0.3C or more are worth investigation. Many are likely due to small localized air temperature changes, the AWS probes being very sensitive to this, but the rapid decreases shown in the examples above, as well as the rapid rises in the Low Temp examples, mean that random noise is likely to be a factor as well.

Have they affected climate analysis?

Comparison of values at identical times has shown that out of 200 cases, all but one had higher or lower temperatures at some previous second than at the last second of that minute, with a significant number of High Temp observations (39% of the sample) with 0.3C to 0.5C decreases in less than one minute, and five much larger. There is a very high probability that similar differences occur at every station in every state, every day.

In more than half of the sample of High Temps, and over 90% of the Low Temps, the discrepancy was within the stated instrumental tolerance range, and therefore the values are not significantly different, but the higher or lower reading becomes the maximum or minimum, with no tolerance range publicised.

This would of course be an advantage if greater extremes were being looked for.

Nearly 10 percent of minimum temperatures were followed by a rise of more than 0.2C, and 44 percent of maxima were followed by a fall of more than 0.2C. While many of these may have entirely natural causes, none of the very large discrepancies examined had an identifiable meteorological cause. It is questionable whether mercury-in-glass or alcohol-in-glass thermometers used in the past would have responded as rapidly as this. This must make claims for record temperatures questionable at best.

If you think that the +/- 0.2C tolerance makes no difference in the big picture, as positives will balance negatives and errors will resolve to a net of zero, think again. Maximum temperature is the High Temp value for the day, and 44% of the discrepancies were more than +0.2C. If random instrument error is causing the apparent temperature spikes, only the highest upwards spike, with or without positive error, is reported. (Downwards spikes in the hot part of the day are not reported unless they show up in the final second of the 30 minute reporting period.) Negative error can never balance any positive error.
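The one-sided effect of picking the highest reading can be illustrated with a small simulation. This is a sketch under stated assumptions, not a model of any real probe: the air temperature is held constant, and the instrument noise is assumed to be zero-mean Gaussian with a standard deviation of 0.1C (a figure chosen for illustration, not a measured property of Bureau equipment).

```python
import random


def simulate(noise_sd=0.1, samples_per_minute=60, trials=2000, seed=1):
    """Compare the error of the one-second maximum with the error of the minute mean."""
    rng = random.Random(seed)
    true_temp = 30.0  # hypothetical constant air temperature
    max_excess = 0.0
    mean_error = 0.0
    for _ in range(trials):
        readings = [true_temp + rng.gauss(0.0, noise_sd)
                    for _ in range(samples_per_minute)]
        max_excess += max(readings) - true_temp
        mean_error += sum(readings) / len(readings) - true_temp
    return max_excess / trials, mean_error / trials


max_bias, mean_bias = simulate()
print(f"average error of one-second maximum: {max_bias:+.2f} C")
print(f"average error of one-minute mean:    {mean_bias:+.2f} C")
```

Under these assumptions the errors in the minute mean cancel out, but the maximum is always pulled upwards, because only the largest positive error in each minute is kept.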

Further, these very precise but questionable values then become part of the climate monitoring system, either directly if they are for ACORN stations, or indirectly if they are used to homogenise “neighbouring” ACORN stations. They also contribute to temperature maps, showing for example how hot New South Wales was in summer.

Again, temperature datasets in the ACORN network are developed from historic, not very precise, but (we hope) fairly accurate data from slow response mercury-in-glass or alcohol-in-glass thermometers observed by humans, merged with very precise but possibly unreliable, rapid response, one second data from Automatic Weather Stations. The extra precision means that temperatures measured by AWS probes are likely to be some tenths of a degree higher or lower than LIG thermometers in similar conditions, and the higher proportion of High Temp differences shown above, relative to Low Temp differences, will lead to higher maxima and means in the AWS era. Let’s consider maxima trends:

Fig. 10: Australian maxima 1910-2016

There are no error bars in any BOM graph. Maxima across Australia as a whole have increased by about 0.9 C per 100 years according to the Bureau, based on analysis of ACORN data. Even if across the whole network of 526 automatic stations the instrument error is limited to +/- 0.2C, that is 22.2% of the claimed temperature trend. In the past, indeed as recently as 2011 (see below), instrument error was as high as +/-0.5C, or about half of the 107 year temperature increase. No wonder the Bureau refuses to show error bands in its climate analyses.
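The percentages above are simple ratios of the tolerance to the trend. A quick check in Python, using only the figures quoted in this post (the function name `share_of_trend` is just for illustration):

```python
def share_of_trend(tolerance_c, trend_c_per_century):
    """Instrument tolerance expressed as a percentage of the centennial trend."""
    return tolerance_c / trend_c_per_century * 100


trend = 0.9  # C per 100 years, the Bureau figure quoted above

for tolerance in (0.2, 0.5):
    print(f"+/-{tolerance} C is {share_of_trend(tolerance, trend):.1f}% of the trend")
```

This gives 22.2% for a tolerance of 0.2C and 55.6% for a tolerance of 0.5C.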

There have been NO comparison studies published of AWS probes and LIG thermometers side by side. Can temperatures recorded in the past from liquid-in-glass thermometers really be compared with AWS one second data? The following quotes are from 2011, when an Independent Review Panel gave its assessment of ACORN before its introduction.

Report of the Independent Peer Review Panel p8 (2011)

Recommendations: The Review Panel recommends that the Bureau of Meteorology should implement the following actions:

A1 Reduce the formal inspection tolerance on ACORN-SAT temperature sensors significantly below the present ±0.5 °C. This future tolerance range should be an achievable value determined by the Bureau’s Observation Program, and should be no greater than the ±0.2 °C encouraged by the World Meteorological Organization.

A2 Analyse and document the likely influence if any of the historical ±0.5 °C inspection tolerance in temperature sensors, on the uncertainty range in both individual station and national multidecadal temperature trends calculated from the ACORN-SAT temperature series.

And the BoM Response: (2012)

… … … An analysis of the results of existing instrument tolerance checks was also carried out. This found that tolerance checks, which are carried out six-monthly at most ACORN-SAT stations, were within 0.2 °C in 90% of cases for automatic temperature probes, 99% of cases for mercury maximum thermometers and 96% of cases for alcohol minimum thermometers.

These results give us a high level of confidence that measurement errors of sufficient size to have a material effect on data over a period of months or longer are rare.

This confirms LIG thermometers have more reliable accuracy than automatic probes, and that 10% of AWS probes are not sufficiently accurate, with higher error rates. That is, at more than 50 sites. If they are in remote areas, their inaccuracy will have an additional large effect on the climate signal. It is to be hoped that Alice Springs, which contributes 7-10% of the national climate signal, is not one of them.

Conclusion

It is very likely that the 199 one minute differences found in a sample of 200 high and low temperature reports are also occurring every day at every weather station across Australia. It is very likely that nearly half of the High Temp cases will differ by more than 0.2 degree Celsius.

Maxima and minima reported by modern temperature probes are likely to be some tenths of a degree higher or lower than those reported historically using Liquid-In-Glass thermometers.

Daily maximum and minimum temperatures reported at Climate Data Online are just noise, and cannot be used to determine record high or low temperatures.

These problems are affecting climate analyses directly if they are at ACORN sites, or indirectly, if they are used to homogenise ACORN sites, and may distort regional temperature maps.

Instrument error may account for between 22% and 55% of the national trend for maxima.

A Wish List of Recommendations (never likely to be adopted):

That the more than 50 sites at which AWS probes are not accurate to +/- 0.2 degree Celsius be identified and replaced with accurate probes as a matter of urgency.

That the Bureau show error bars on all of its products, in particular temperature maps and time series, as well as calculations of temperature trends.

That the Bureau of Meteorology recode its existing three criteria filter, to zero-out spurious spikes and preferably send them as fault flags into a separate file in order to improve Quality Control.

That the Bureau replace its one second spot maxima and minima reports with a method similar to wind speed reports: the average over 10 minutes. That would be a much more realistic measure of temperature.
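To show what the last recommendation would mean in practice, here is a minimal sketch comparing a one second spot maximum with the maximum of a 10 minute running average. The function names and the sample data are hypothetical; this is not how the Bureau's systems are coded.

```python
def spot_maximum(samples):
    """Highest single one-second reading."""
    return max(samples)


def averaged_maximum(samples, window):
    """Highest mean over any run of `window` consecutive one-second readings."""
    if window <= 0 or window > len(samples):
        raise ValueError("window must be between 1 and len(samples)")
    running = sum(samples[:window])
    best = running
    for i in range(window, len(samples)):
        # Slide the window forward by one second.
        running += samples[i] - samples[i - window]
        best = max(best, running)
    return best / window


# Hypothetical 20 minutes of steady 30.0C with one rogue second of 32.9C.
readings = [30.0] * 1200
readings[600] = 32.9

spot = spot_maximum(readings)
averaged = averaged_maximum(readings, window=600)  # 600 seconds = 10 minutes
print(f"spot maximum: {spot:.1f} C, 10 minute averaged maximum: {averaged:.3f} C")
```

With these invented readings the spot maximum is 32.9C, while the averaged maximum stays within a hundredth of a degree of 30.0C.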

Tags: Australia, bom, temperature

March 21, 2017 at 12:29 pm

A very interesting discovery Ken. Am I correct in presuming that the BOM published daily and monthly mean temperatures would not be affected by these blips?

March 21, 2017 at 4:05 pm

Well that’s what they would say anyway. I would say that it is very likely that these “blips” affect most if not all sites, so they would certainly affect daily and monthly means. The effect is much greater on maxima than minima so means will be increased.

March 21, 2017 at 4:45 pm

Over the whole of my measurement career, there were standard procedures for moving from one instrument or instrument type to another. This was particularly the case in my analytical chemistry phase. Although some were informal, the procedures were carried out by all players that I was familiar with, like second nature, part of the craft of measurement.

Overlap exercises were central to this. They were carried on and on until the transfer functions were defined clearly enough to be useful.

It is hard to believe that the BOM did not conduct similar overlaps. Overlaps comparing LIG with electronic, small shelters with large with beehive, shelter/sensor combinations placed close together to seek systematic differences from micro-location effects — all of these ought to have been done and the results made available. Even down to the effect of placing a thermometer different distances from the back of the shelter, as Bill Johnston notes in emails elsewhere.

Maybe those who have wondered this have asked BOM the wrong questions, or the BOM has interpreted questions in a way that gave answers that did not clarify much.

Have you, Ken, or any reader, actually asked BOM for a compendium of results from overlap exercises, in a few clear words? I have not, so I cannot speculate about what has been done by BOM.

Geoff

March 21, 2017 at 5:33 pm

No I haven’t asked them. A search on their website does not disclose any papers on comparison/overlap studies, nor indeed for wind speed when anemometers were changed. Worth a direct question I guess.

March 21, 2017 at 7:39 pm

I find it difficult to believe that an official body, however unwilling it is to provide /real/ values of effective temperature, could conceive of the one second snap value as being something to be regarded as a proper observation. Presumably these people have some sort of data storage equipment, where values over some minutes could be accumulated and then converted to an average, which would then be stored. My wrist fitness monitor has loads of storage!

Come on, BOM. Get up to date.

(Have I got this all wrong?)

March 21, 2017 at 7:55 pm

Hi Ken,

Another good question to ask the BOM is, why they use 1 second data for maxima and minima. If this is actually the case then this seems ridiculously inappropriate.

From page 114 of ‘The Weather Observer’s Handbook’ 2012.

“it is good practice to sample the air temperature every few seconds but to take a running average over a short period, and to take the highest and lowest (respectively) of the running average samples as the day’s maximum and minimum air temperature. This also helps to iron out minor stray electrical noise or sensor/logger resolution artifacts”.

On the same page of the handbook we find that the WMO recommendation is for an average over 1 minute. A 1 minute running average is used by the UK MMS while the NOAA ASOS system uses 5 minute running averages and USCRN uses fixed 5 minute averages. This averaging is also performed to retain some consistency with previous measurements using mercury thermometers.

Possibly there is a misunderstanding. I strongly suspect that the BOM is using 1 minute running averages of temperatures at 1 second resolution (1 second being the sample period).

March 21, 2017 at 10:00 pm

There is more weird stuff going on. Coen Airport is showing today’s maximum temperature as being 32.9C at 2:30pm while the table of today’s temperatures for the same location shows 30.0C at 2:30pm!

The only conceivable explanation for this is that the maximum wind gust (from the NNE) also happened to occur at exactly 2:30 pm and maybe it brought a gust of hot air from further north? Sounds far fetched but who knows?

March 22, 2017 at 10:15 am

Good morning Mike and good to hear from you again!

As you say, weird! My colleagues and I have been struggling to get our heads around this for the last month. Thanks for finding the reading at Coen: 2.9C is the largest discrepancy I’ve seen so far. I will do a plot for Coen including WOW data if possible.

Re: a possible misunderstanding as in your comment above: this is a verbatim quote from the BOM officer’s reply to me, when I asked whether the value was for a 1 minute mean or any other length of time:-

“Let me address your specific questions with the example from Hervey Bay.

Firstly, we receive AWS data every minute. There are 3 temperature values:

1. Most recent one second measurement

2. Highest one second measurement (for the previous 60 secs)

3. Lowest one second measurement (for the previous 60 secs)

Relating this to the 30 minute observations page: For an observation taken at 0600, the values are for the one minute 0559-0600.

I’ve looked at the data for Hervey Bay at 0600 on the 22nd February.

25.3, 25.4, 23.2 .”

So there seems to be no doubt. How else could there be 3 different readings for the same minute if they had been averaged? 3 different running means in 60 seconds implies HUGE spikes.

I encourage you to ask them for more clarification through their feedback page at http://www.bom.gov.au/other/feedback/ They’re probably a bit sick of me!

March 22, 2017 at 11:26 am

I’ll post Coen as a new Figure in the post above as it’s so obviously wrong. What took me a while to understand about these discrepancies was that when the High Temp is above the 30 minute observation, this is a temperature DROP. So Coen’s temperature supposedly fell 2.9C in under 60 seconds.

March 22, 2017 at 9:58 pm

Ken, the plot thickens. I am not sure what the time response is of the RTD in use by the BOM in their automatic weather stations.

The Weather Observers Handbook quotes a 20 seconds response time for a RTD compared to 1 minute for a mercury thermometer. It also states that the response time of a Stevenson screen ranges from 4 to 20 minutes depending on the wind speed.

In light of this, it seems bizarre to be oversampling to the extent of taking 1 second measurements. Extracting the maximum and minimum from single 1 second samples is then just asking for trouble. The only way I can see this approach being viable is if the data is quality controlled at some later time. Any data that has a difference between the maximum and minimum that exceeds a threshold value could then be automatically rejected.
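A threshold check of that kind might look like the sketch below. The 0.5C threshold and the function name `flag_suspect_minutes` are assumptions made for illustration; the Bureau's actual QC criteria are not known here. The first tuple uses the Hervey Bay values quoted earlier in this thread (latest, highest, lowest); the second is invented.

```python
def flag_suspect_minutes(minutes, max_range=0.5):
    """Return the indices of minutes whose high-low spread exceeds max_range.

    Each entry in `minutes` is a (latest, high, low) tuple in degrees C.
    """
    suspect = []
    for index, (latest, high, low) in enumerate(minutes):
        # Round to the 0.1C reporting resolution to avoid float artefacts.
        if round(high - low, 1) > max_range:
            suspect.append(index)
    return suspect


minutes = [
    (25.3, 25.4, 23.2),  # Hervey Bay, 0600 on 22 February
    (30.1, 30.2, 30.0),  # hypothetical quiet minute
]
flagged = flag_suspect_minutes(minutes)
print(flagged)
```

Only the Hervey Bay minute, with its 2.2C spread, is flagged under this assumed threshold.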

It does say on the BOM website that one of the QC criteria is ‘consistency over time (e.g. is a sudden or brief temperature spike realistic?)’; see http://www.bom.gov.au/climate/data-services/content/quality-control.html.

Clearly a 2.9C difference in 1 minute would exceed the threshold and hopefully be picked up at this later time. It would be interesting to see if Tmax for Coen Airport for March 21 is flagged as ‘suspect’ and the value subsequently altered down. If it isn’t then, in my humble opinion, the QC systems at the BOM may need a major overhaul.

I will, as you suggest, see if I can get a response from the BOM that clarifies this.

By the way, how did you access the min and max components of the 1 minute Hervey Bay data that you quote above? Is it available somewhere on the BOM website?

Ken, thanks again for your painstaking detective work that has opened this can of worms.

March 23, 2017 at 5:29 am

Hi Mike

That is how QC works I believe.

Thanks for the quotes from the Observers handbook- very enlightening.

The LowTemp and final second values for 0600 at Hervey Bay were from the district summary and latest obs pages; but they gave me all 3 when I asked them – see comment above (and also later for 0500, 0530, and 0630.).

March 23, 2017 at 11:50 am

Hi Mike, you’re out of moderation. Thanks for your recent helpful comments.

Ken

March 25, 2017 at 12:22 pm

In 2013, when Sydney broke its highest daily max of 45.3C (set in Jan 1939), the temp rose from 44.9C to 45.8C in 4 mins and then dropped 1.0C in the next 6 minutes. The old thermometers would not have been able to pick up a sudden surge like that.

March 26, 2017 at 12:45 pm

Hi Ken,

Thank-you for removing my comments from moderation. Much appreciated.

In theory, there is possibly some “method to their madness” if the bureau is using 1 second data to perform a QC check to flag outliers. You stated above that you don’t believe this is the case.

The WMO guidelines (see https://www.wmo.int/pages/prog/www/OSY/Meetings/ET-AWS3/Doc4(1).pdf section 1 (b) page 5) suggest they should be doing this type of QC.

In that light it will be interesting to see if the anomalous data that you have identified eventually ends up with an ‘N’ in the ‘Quality’ column of the daily temperature data. Alternatively the recorded Tmin (or Tmax) could be different to what was initially reported.

I did find poking around on the BOM site the following which may be relevant in a historical context.

“Determination of maximum and minimum temperature data from automated monitoring systems has been relatively static since the initial development of the AWS algorithms in 1990. The primary AWS sampling rate is 1 Hz, and mean, maximum temperature statistics are generated from the valid 1 Hz samples over the period of interest. The AWS stores the previous 72 hours of data in the form of statistics for ten minute periods in a circular buffer.”

The term ‘valid 1 Hz samples’ is also particularly pertinent. I think this is where some clarification is needed.

I have a few more comments regarding the QC post processing of the temperature data. I have had a look at the daily Tmin data for both Hervey Bay and Maryborough. I gather the Bureau reviews the data and converts the ‘N’ to ‘Y’ on a monthly basis. This process seems to be rather haphazard, as there is a whole month (December 2014) that still has ‘N’ for every day of the month.

It is the same for Maryborough and a number of other sites. I assume this means this month has not been checked for QC. Why not? Could it be an oversight due to the job shedding and cost cuts announced in 2014 and implemented over the past few years? Who knows? Another question that I will ask the bureau about.

There are other data that are labelled with ‘N’ that are occasionally interspersed inside months that are predominantly marked with a ‘Y’.

For Hervey Bay there are 22 such days marked ‘N’ between 2003 and the start of 2014. This corresponds to a failure rate of about 0.6%. Comparing this data with that from Maryborough, which is only 30 km away, the ‘N’ labelled data seems to be regionally consistent, i.e. the differences between the minima at Hervey Bay and Maryborough are typical of the differences for other Tmin data that are marked with a ‘Y’ in the Quality column. Nor do they seem to be particularly ‘spikey’.

Possibly those marked ’N’ are data points that have been rejected on having too large a disparity between the maximum and minimum on a 1 minute basis.

I think we should suspend judgement regarding the very weird cases that you have identified, and wait a month until the daily data has been QC checked.

If they survive unscathed then I will gladly take up the cudgels.

I do however take issue with your general conclusions regarding the impact of AWS upon the overall temperature record in particular the Tmax data shown in figure 10.

Firstly the methodology the BOM employs appears to be the same since 1990, so this is unlikely to be a significant factor after this time.

Secondly the thermal inertia of the conventional Stevenson screen, which is typically much greater than 4 minutes, will swamp the much shorter response time of both AWS devices and traditional thermometers. Accordingly the effect of the switch over to AWS should be minuscule or non-existent.

Again Ken, thanks for letting me contribute my comments unhindered despite our many differences of opinion.

March 26, 2017 at 4:18 pm

You raise interesting points. I wonder what the criterion for ‘outlier’ would be? We can only speculate. Re: thermal lag. We should perhaps regard the ‘instrument’ as the whole Stevenson screen and its contents, as well as the AWS probe. In which case anomalous up and down spikes must be entirely due to instrument error, if even jet blast cannot make the screen and probe change in less than 4 minutes- and then drop down again in under one minute.

August 7, 2017 at 4:56 pm

[…] (See here and here.) […]

September 1, 2017 at 10:26 pm

[…] One of Australia’s true national treasures, Ken Stewart, was documenting this disaster some months ago – when I was still in denial. One of his blog posts can be accessed here: https://kenskingdom.wordpress.com/2017/03/21/how-temperature-is-measured-in-australia-part-2/ […]

September 6, 2017 at 1:38 am

Hi Ken,

this is great work, and it’s a scandal this situation exists at all.

From my years as a mine geologist, one of the biggest problems encountered is drillhole and pit floor grade sampling as an estimate of the true orebody grade. Decades have been spent on the statistical treatment of this problem with numerous revisions to the methodology as orebodies are mined and true grades are determined from production records. As a consequence, a specialty science-statistics expertise to deal with this issue has developed in the industry.

It seems inconceivable to me that in regard to temperature measurement the BOM has not taken the basic scientific steps to check this “sampling problem”, especially when measurements moved from some sort of time-averaged measurement before 1997, to 1 second measurements now.

To my simple mind, some sort of integration over the suggested 5-7 minutes must be used as the measurement basis to make the AWS data comparable to the historical thermometer data?

September 6, 2017 at 2:03 am

Ken, a second comment;

another area of climate science where this change of sampling resolution has already been identified as a problem is atmospheric CO2 measurement.

Modern CO2 data are sampled every minute/hour at the Mauna Loa observatory in Hawaii, and daily-monthly averages are calculated. The pre-direct measurement CO2 comes from proxy records, mainly from ice core data. The ice core data has relatively low resolution of 10 – 100 years.

The splicing of this high resolution data onto the relatively low resolution historical proxy record has given rise to significant questions about the validity of comparing the two data sets.

For instance, a major question has arisen whether the directly measured CO2 rise from 316ppm in 1958 to 414ppm today (over 59 years) would ever be seen in the major peaks in the proxy data from 120kyr, 245kyr, 330kyr and 410kyr ago.

September 6, 2017 at 7:59 am

Good morning Dave

Exactly. And apparently the rest of the world uses averaging but not all the same standard. Why is the BOM out of step?

September 6, 2017 at 7:45 pm

Ken, going back to the mine grade sampling analogy, there were various statistical significance tests which had to be done before a measured value could be used as a valid datapoint in the orebody model. Otherwise it was excluded.

Where is the detailed BOM analysis for these vastly different temperature data sets, before they are used?

September 7, 2017 at 8:23 am

Completely missing. They don’t even keep the data for side by side comparison any more.

September 8, 2017 at 2:17 am

[…] just yesterday the Bureau acknowledge what I have been explaining in blog posts for some weeks, and Ken Stewart since February: that the Bureau is not following these […]

September 14, 2017 at 7:39 pm

[…] ground turbulence so temperatures do not fluctuate from one minute to the next by very much. In How Temperature Is Measured in Australia Part 2 I showed that 91% of low temperatures vary from final second temperatures in the same minute by […]

October 19, 2017 at 7:01 pm

[…] More examples from Ken Stewart here and here […]

October 20, 2017 at 2:01 pm

Very nice work, Ken, thanks. Explains why I see similar spikes at the Broome Airport Site, but guess what? Just 8km down the road, the Broome Port shows none of these spikes at all. Water surrounds it on 3 sides.

October 20, 2017 at 3:49 pm

That’s interesting. At Lady Elliot Island, completely surrounded by water, there are just as many spikes, but not as high as on the mainland.

October 20, 2017 at 8:40 pm

I find this stuff about the BOM to be close to unbelievable. Do they have any access to statisticians at BOM? Or engineers for that matter. Perhaps they should talk to our Met Office for a bit of advice, though even they may not be 100% perfect :-)) Otherwise they should just use a bit of common sense about their measurement and arithmetic techniques.
